Our Methodology

How SlopSort creates unbiased, AI-powered consensus rankings — no paid placements, no bias in the algorithm, just data.

Step 1: Query 20+ AI Models

We query 20+ leading AI models — including GPT-4o, Claude, Gemini, Llama, Perplexity, Mistral, Command R+, DeepSeek, and more — asking each one the same question independently. No AI sees what the others said.

Each AI returns a structured ranked list with product/place names, details, and reasoning. This independence is what makes the consensus meaningful — when 15 out of 20 AI models independently pick the same restaurant, that carries real signal.

For rankings that need extra rigor, we activate Deep Research Mode. This instructs each AI to first research across multiple real-world sources — Google, Yelp, TripAdvisor, Reddit, expert reviews, local blogs — before forming its ranked list. The AI synthesizes what actual humans are saying, not just its training data.

Step 2: Intelligent Deduplication

Different AIs often refer to the same thing by different names. Our multi-layer deduplication engine merges them intelligently so nothing gets double-counted — or incorrectly combined.

For products, we extract structured identity fields (brand, model, capacity) and merge entries only when every extracted field matches exactly. "Sony WH-1000XM5" and "Sony 1000XM5" resolve to the same brand and model, so they merge; "Sony WH-1000XM4" and "Sony WH-1000XM5" stay separate.

When structured fields are not available (like for restaurants), we use token-overlap similarity with an 85% threshold. "La Nova Pizzeria" merges with "La Nova" but "La Nova" stays separate from "La Noce."
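As a rough illustration of the name matching described above, here is a minimal Python sketch. The exact similarity measure is not specified here, so this assumes the overlap coefficient (shared tokens divided by the size of the smaller token set), which happens to reproduce both examples. The function names are hypothetical.

```python
import re


def tokens(name: str) -> set:
    """Lowercase the name and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", name.lower()))


def token_overlap(a: str, b: str) -> float:
    """Overlap coefficient: shared tokens / size of the smaller token set.

    Assumption: the real engine may use a different token-overlap measure.
    """
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / min(len(ta), len(tb))


def should_merge(a: str, b: str, threshold: float = 0.85) -> bool:
    """Merge two names when their token overlap reaches the 85% threshold."""
    return token_overlap(a, b) >= threshold


print(should_merge("La Nova Pizzeria", "La Nova"))  # both tokens of "La Nova" are shared
print(should_merge("La Nova", "La Noce"))           # only "la" is shared
```

Under this assumption, "La Nova Pizzeria" and "La Nova" score 1.0 and merge, while "La Nova" and "La Noce" score 0.5 and stay separate. A plain Jaccard ratio would score the first pair at about 0.67, below the threshold, which is why the overlap coefficient is used in this sketch.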

Products with different capacities (3.7qt vs 5.8qt) or sizes are kept separate even if they share a brand and model name. The system detects capacity values and treats them as distinct products.
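One way to picture the capacity check is as part of an identity key that must match in full. The sketch below is illustrative only: the unit list, the `ProductIdentity` type, and the "Acme CrispPro" product are all hypothetical, and it assumes brand and model have already been extracted.

```python
import re
from typing import NamedTuple, Optional

# Hypothetical unit list; the text above only mentions quart sizes such as 3.7qt.
CAPACITY_RE = re.compile(r"(\d+(?:\.\d+)?)\s*(qt|oz|gb|tb|l)\b", re.IGNORECASE)


class ProductIdentity(NamedTuple):
    brand: str
    model: str
    capacity: Optional[str]  # normalized, e.g. "3.7qt"; None if no capacity found


def extract_capacity(name: str) -> Optional[str]:
    """Return a normalized capacity such as '5.8qt', or None if none is present."""
    match = CAPACITY_RE.search(name)
    if match is None:
        return None
    return f"{float(match.group(1))}{match.group(2).lower()}"


def make_identity(brand: str, model: str, raw_name: str) -> ProductIdentity:
    """Build a comparison key; brand and model are compared case-insensitively."""
    return ProductIdentity(brand.strip().lower(), model.strip().lower(),
                           extract_capacity(raw_name))


small = make_identity("Acme", "CrispPro", "Acme CrispPro 3.7qt Air Fryer")
large = make_identity("Acme", "CrispPro", "Acme CrispPro Air Fryer 5.8 QT")
large_again = make_identity("ACME", "crisppro", "Acme CrispPro 5.8qt")

print(small == large)        # False: capacities differ, so the entries stay separate
print(large == large_again)  # True: every identity field matches, so they merge
```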

The engine adapts its matching strategy based on what is being ranked — products, places, or tips each have different similarity thresholds, conflict checks, and identity field configurations.

After deduplication, an auto-cleanup pass merges any remaining high-confidence duplicates (those with 80%+ similarity). Every merge is logged and can be reviewed or undone.

Step 3: The Scoring Algorithm

We combine the AI responses using a multi-factor scoring system designed to reward both high placement and broad agreement:

Position Points: higher rank = more points. If an AI submits a list of 10 items, the #1 pick earns 10 points, #2 earns 9, and so on. The formula adapts to each AI's actual list size.

points = (listSize - position + 1) x weight, where position is 1 for the top pick

Top Pick Bonus: products ranked in the top 5 by any AI receive a stacking bonus. A #1 ranking carries a 2x multiplier, #2 is 1x, #3 is 0.6x, #4 is 0.35x, and #5 is 0.2x.

bonus = count x listSize x 0.5 x multiplier

Consensus Bonus: every product gets extra points for each AI that picked it. For a 10-item list, each AI appearance adds 4 bonus points. More AIs agreeing = bigger bonus.

bonus = appearances x ceil(listSize x 0.4)

Single Pick Penalty: if only one AI recommends a product and it was not that AI's #1 pick, the product's total score is cut in half. This penalizes outlier picks that lack any consensus support.

if count=1 AND not #1 pick: score x 0.5

Total = Position Points + Top Pick Bonus + Consensus Bonus - Single Pick Penalty, where the Single Pick Penalty is half of the summed score when it applies and zero otherwise.
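To make the arithmetic concrete, here is a hedged Python sketch of how the four components could combine. It is an interpretation, not the production code. It assumes position is 1 for the top pick (so the #1 pick on a 10-item list earns 10 points, as in the example), that "count" in the top pick formula means the number of AIs placing the item at that rank, that each item appears at most once per list after deduplication, and that weight defaults to 1. The name `score_items` is hypothetical.

```python
import math
from collections import defaultdict

# Multipliers for ranks #1 through #5, taken from the text above.
TOP_PICK_MULTIPLIERS = {1: 2.0, 2: 1.0, 3: 0.6, 4: 0.35, 5: 0.2}


def score_items(ai_lists, weight=1.0):
    """Return {item: total score} for a list of ranked lists (best pick first)."""
    position = defaultdict(float)
    top_bonus = defaultdict(float)
    consensus = defaultdict(float)
    appearances = defaultdict(int)
    best_rank = {}

    for ranked in ai_lists:
        size = len(ranked)
        for rank, item in enumerate(ranked, start=1):
            # Position points: #1 earns `size` points, the last item earns 1.
            position[item] += (size - rank + 1) * weight
            # Top pick bonus: one contribution per AI that ranked the item in its top 5.
            top_bonus[item] += size * 0.5 * TOP_PICK_MULTIPLIERS.get(rank, 0.0)
            # Consensus bonus: ceil(size x 0.4), written as 2/5 to avoid float rounding.
            consensus[item] += math.ceil(size * 2 / 5)
            appearances[item] += 1
            best_rank[item] = min(rank, best_rank.get(item, rank))

    totals = {}
    for item in position:
        total = position[item] + top_bonus[item] + consensus[item]
        # Single pick penalty: one AI only, and not as its #1 pick.
        if appearances[item] == 1 and best_rank[item] != 1:
            total *= 0.5
        totals[item] = total
    return totals


list_one = ["A", "B", "C", "D", "E", "F", "G", "H", "I", "J"]
list_two = ["A", "C", "B", "D", "E", "F", "G", "H", "I", "K"]
scores = score_items([list_one, list_two])
print(scores["A"])  # 48.0: 20 position + 20 top pick + 8 consensus
print(scores["J"])  # 2.5: (1 position + 4 consensus) halved by the penalty
```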

Products must appear in at least 2 AI lists to be included in the final published ranking. Single-AI picks are tracked but filtered from the public consensus unless they were a #1 pick with a strong enough score.
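The publishing rule can be sketched as a small predicate. The cutoff for a "strong enough" score is not stated here, so `min_single_pick_score` is a hypothetical parameter the caller must supply.

```python
def is_publishable(appearances: int, best_rank: int, score: float,
                   min_single_pick_score: float) -> bool:
    """Decide whether an item belongs in the published consensus ranking.

    Assumption: `min_single_pick_score` stands in for the unspecified
    "strong enough score" cutoff applied to single-AI #1 picks.
    """
    if appearances >= 2:
        return True
    return best_rank == 1 and score >= min_single_pick_score
```

For example, with an illustrative cutoff of 20, an item picked by three AIs is always published, while a single-AI pick is published only if it was that AI's #1 choice and scored at least 20.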

Step 4: Quality Control & Red Flags

AI models are not perfect. They sometimes recommend places that have permanently closed, products that do not exist, entries in the wrong category, or vague generic suggestions. Our quality control catches these:

Entries can be flagged as: Closed/Shut Down, Wrong Location, Does Not Exist, Duplicate Entry, Wrong Category, or Inaccurate. Flagged entries are excluded from scoring — the AI's contribution for that entry is completely skipped.

Entries with generic names (3 words or fewer, no model numbers) are automatically flagged as vague. The AI model that submitted them receives an accuracy penalty, discouraging lazy recommendations.

For place-type rankings (restaurants, bars, etc.), we cross-reference results with the Google Places API. This verifies addresses, operating status, ratings, and review counts — adding a real-world data layer on top of AI consensus.

When an AI submits a red-flagged entry, the flag counts against that AI's overall accuracy. This creates accountability — models that consistently recommend closed businesses or nonexistent products see their accuracy scores drop on our AI Leaderboard.

Step 5: AI Accuracy Tracking

We do not just use AI models — we measure how accurate they are. After each ranking is finalized, every AI model gets an accuracy score based on how well its individual picks matched the final consensus:

Consensus Rate: what percentage of the AI's picks made it into the final consensus ranking. An AI whose picks mostly appear in the consensus is considered more reliable.

Top 10 Overlap: how many of the AI's top 10 picks appear in the consensus top 10. Getting the top results right matters more than matching lower-ranked items.

Exact Rank Matches: bonus points when an AI ranks something at exactly the same position as the final consensus. Predicting the exact order is hard, so exact matches are rewarded.

Vague Entry Penalty: each vague or generic entry submitted by the AI deducts points. This penalizes models that pad their lists with low-effort recommendations like "Local Brewery" instead of specific names.
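The sketch below shows one plausible way these four signals could combine into a single number. It uses the weights from the Scoring Summary (60% consensus rate, 30% top 10 overlap, +3 per exact match, -2 per vague entry). How the percentages and point values are actually added together is not spelled out, so the points scale here is an assumption, and `accuracy_score` is a hypothetical name.

```python
def accuracy_score(ai_picks, consensus, vague_count=0):
    """Score one AI's ranked picks against the final consensus ranking.

    Assumption: the rate and overlap are fractions scaled to 60 and 30 points,
    then exact-match bonuses and vague-entry penalties are added on top.
    """
    if not ai_picks:
        return 0.0
    consensus_rank = {item: i for i, item in enumerate(consensus, start=1)}

    # Share of the AI's picks that made the final consensus.
    consensus_rate = sum(p in consensus_rank for p in ai_picks) / len(ai_picks)

    # Share of the AI's top 10 that also sits in the consensus top 10.
    ai_top10 = ai_picks[:10]
    consensus_top10 = set(consensus[:10])
    top10_overlap = sum(p in consensus_top10 for p in ai_top10) / len(ai_top10)

    # Picks placed at exactly the consensus position.
    exact_matches = sum(consensus_rank.get(p) == i
                        for i, p in enumerate(ai_picks, start=1))

    return (60 * consensus_rate + 30 * top10_overlap
            + 3 * exact_matches - 2 * vague_count)


result = accuracy_score(["A", "B", "C", "X"], ["A", "C", "B"], vague_count=1)
print(result)  # 68.5 = 45 (rate) + 22.5 (overlap) + 3 (one exact match) - 2 (one vague entry)
```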

You can see the full accuracy breakdown for every AI model on our AI Leaderboard. Over time, this data reveals which models excel at different types of rankings — some are better at tech products, others at restaurant picks or travel recommendations.

The Slop Score

Every ranking receives a Slop Score from 1 to 10. This is a per-project metric that measures how much sorting was required to produce the final consensus — the higher the score, the more slop was processed.

AI Disagreement: how much the AI models disagree on where to rank each product. Higher variance in rank positions means more sorting was needed to find consensus.

Duplicate Merge Ratio: how many entries were duplicates that had to be merged. More merges means the AIs used different names for the same thing, which is classic slop.

Raw-to-Final Ratio: how many raw entries were processed down to the final ranked list. A large reduction means significant sorting effort.

Single-Pick Prevalence: what proportion of the final list was recommended by only a single AI model. More single-picks means less consensus support.

Red Flag Count: how many red flags were filed during sorting, such as closed businesses, nonexistent products, and duplicates within the same AI list. More flags, more slop.

Slop Score = 1 + 9 x [(Disagreement x 25%) + (Merges x 20%) + (Raw÷Final x 20%) + (Single-Picks x 20%) + (Red Flags x 15%)]

A Slop Score of 1 means the data was clean with strong agreement. A score of 10 means maximum sorting effort was needed — heavy deduplication, high disagreement, and many red flags.
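For readers who want to see the weighting in action, here is a minimal Python sketch of the formula. It assumes each factor has already been normalized to a 0 to 1 scale (which is what makes the result land between 1 and 10). How each factor is normalized is not described here, so the dictionary keys and the clamping step are illustrative.

```python
# Weights from the Slop Score formula; they sum to 100%.
SLOP_WEIGHTS = {
    "disagreement": 0.25,
    "merges": 0.20,
    "raw_to_final": 0.20,
    "single_picks": 0.20,
    "red_flags": 0.15,
}


def slop_score(factors):
    """Blend normalized factors (each expected in 0..1) into a 1-10 Slop Score."""
    blended = sum(
        weight * min(max(factors[name], 0.0), 1.0)  # clamp stray values into 0..1
        for name, weight in SLOP_WEIGHTS.items()
    )
    return 1 + 9 * blended


clean = slop_score({name: 0.0 for name in SLOP_WEIGHTS})
messy = slop_score({name: 1.0 for name in SLOP_WEIGHTS})
print(round(clean, 2), round(messy, 2))  # 1.0 10.0
```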

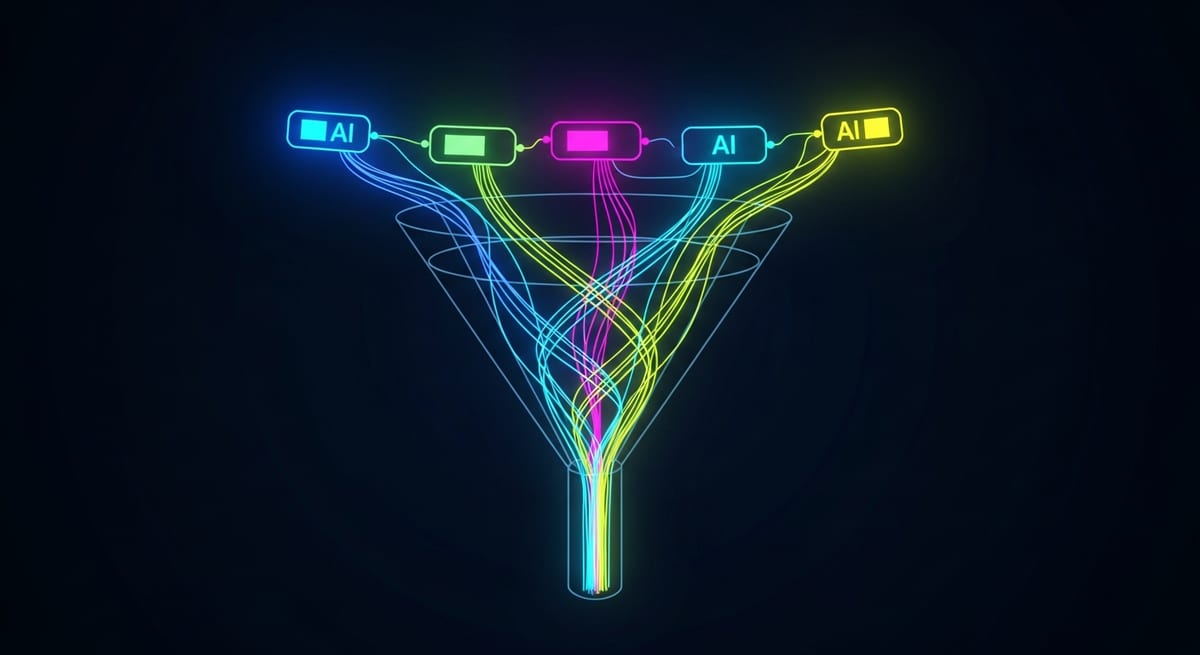

The Full Pipeline

Every ranking goes through this complete pipeline. The result: hundreds of raw AI entries are distilled down to a clean, verified consensus list where each item has been recommended by multiple independent AI sources and cross-checked against real-world data.

No Bias Guarantee

Our rankings are never influenced by affiliate relationships. While this site contains affiliate links to help support our work, the ranking algorithm has zero knowledge of which products have affiliate links and which do not.

The core ranking process is fully automated: AIs are queried, entries are deduplicated, red flags are removed, scores are calculated, and rankings are generated — all without human influence on the ordering. A human editor reviews each list before publishing for quality and accuracy, but never changes the AI-determined order. What you see is pure AI consensus.

Scoring Summary

The tables below summarize the key components of the SlopSort consensus scoring algorithm, the Slop Score factors, and the AI accuracy grading system.

Consensus scoring

| Component | What It Measures | Impact |
|---|---|---|
| Position Points | Where each AI ranked the item (higher rank = more points) | Base score; adapts to each AI's list size |
| Top 5 Pick Bonus | Items ranked in the top 5 by any AI model | Stacking multiplier; #1 picks receive the largest bonus |
| Consensus Bonus | How many AI models independently recommended the item | More AI agreement = higher bonus |
| Single-Pick Penalty | Items recommended by only one AI (and not as #1) | Score reduced by 50%; penalizes outlier picks |
| Red Flag Removal | Closed businesses, nonexistent products, wrong categories | Flagged entries excluded entirely from scoring |
| Google Verification | Cross-reference with Google Places for location rankings | Confirms address, operating status, ratings, review counts |
| Minimum Appearances | Items must appear on at least 2 AI lists | Ensures every published result has consensus support |

Slop Score factors

| Factor | Weight | Description |
|---|---|---|
| AI Disagreement | 25% | Average rank position variance across AI models for each product |
| Duplicate Merge Ratio | 20% | Proportion of raw entries that were merged as duplicates |
| Raw-to-Final Ratio | 20% | How many raw entries were reduced to the final ranked list |
| Single-Pick Prevalence | 20% | Proportion of final products only recommended by a single AI model |
| Red Flag Count | 15% | Number of red flags filed relative to AI model count |

AI accuracy grading

| Metric | Weight / Value | Description |
|---|---|---|
| Consensus Rate | 60% | Percentage of the AI's picks that appear in the final consensus ranking |
| Top 10 Overlap | 30% | How many of the AI's top 10 appear in the consensus top 10 |
| Exact Rank Matches | +3 pts each | Bonus when the AI's rank matches the exact consensus position |
| Vague Entry Penalty | -2 pts each | Deduction for generic or low-effort recommendations |